1.Установка prometheus

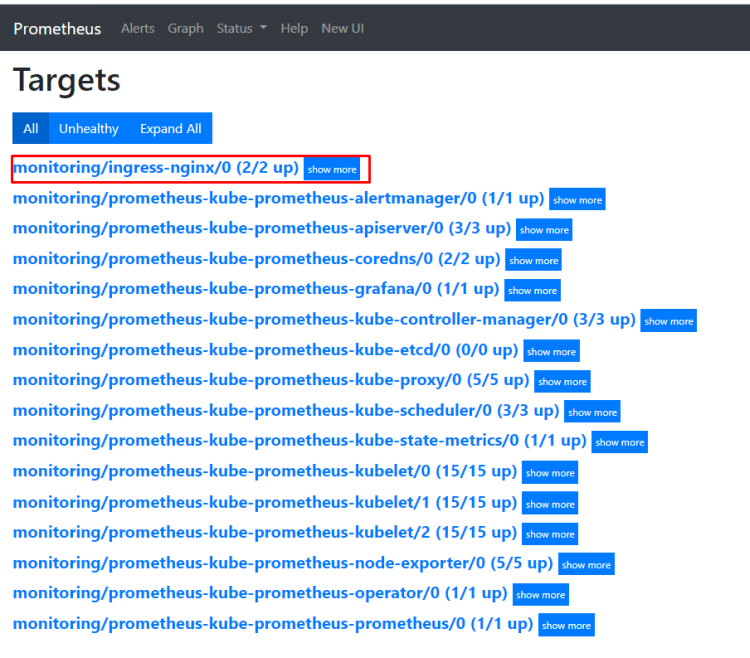

2.exporter nginx(ingress-controller)

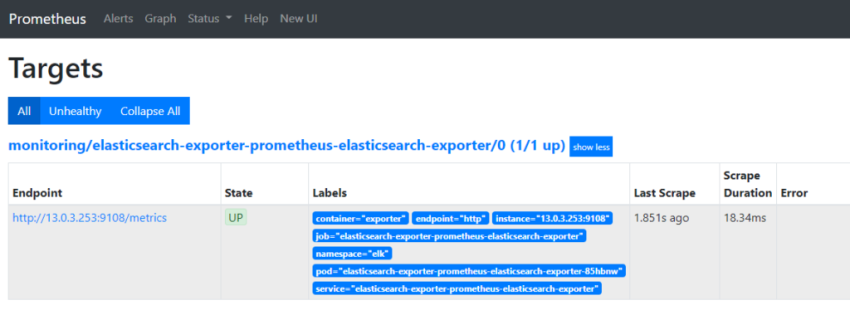

3.exporter elasticsearch

4.exporter rabbitmq

5.exporter redis

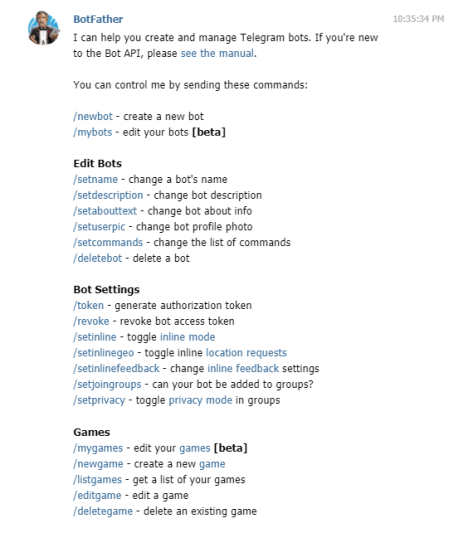

6.настройка оповещений в telegram

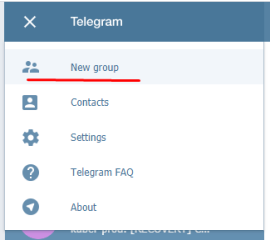

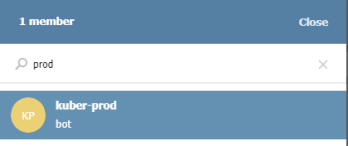

6.1 настройка оповещений в telegram в различные чаты(группы)

6.2. настройка оповещений в telegram разграничение оповещений по группам (исключения уведомлений)

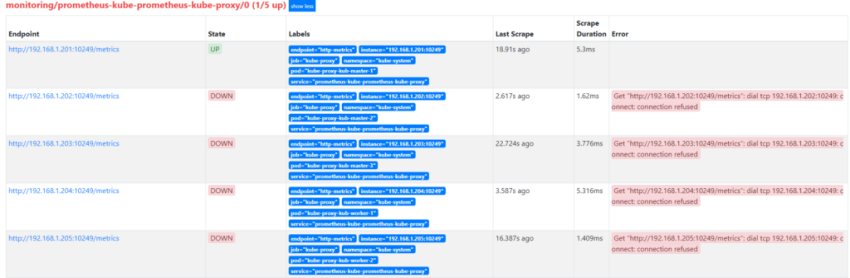

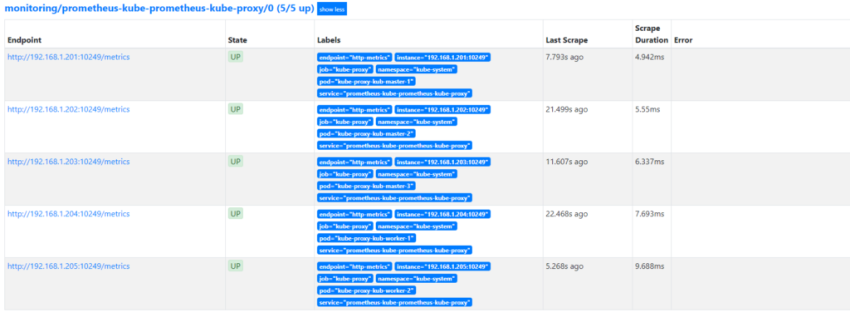

7.Проблема с prometheus-kube-proxy

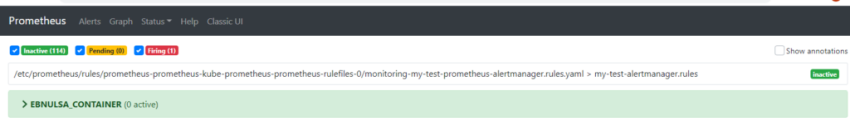

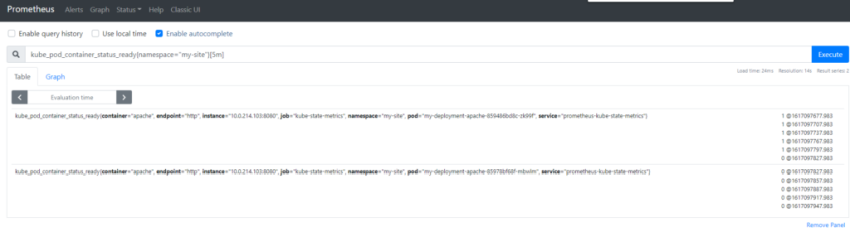

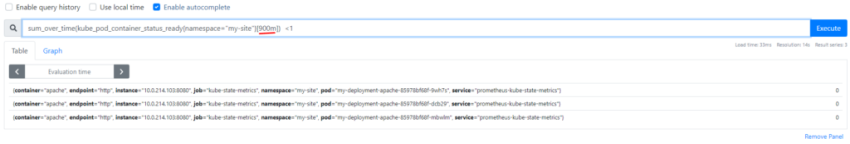

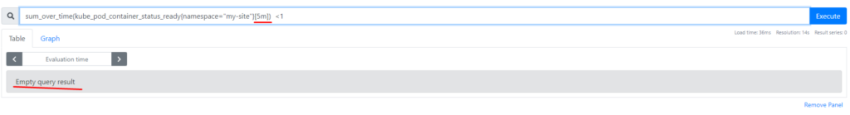

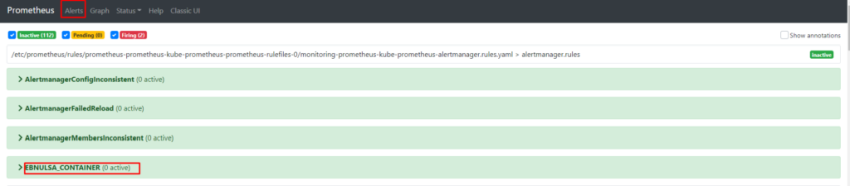

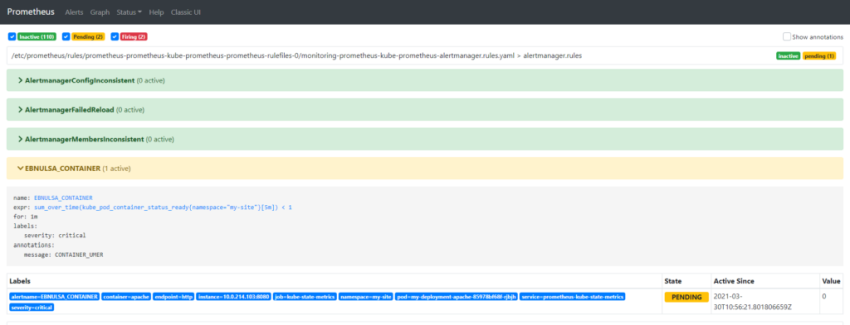

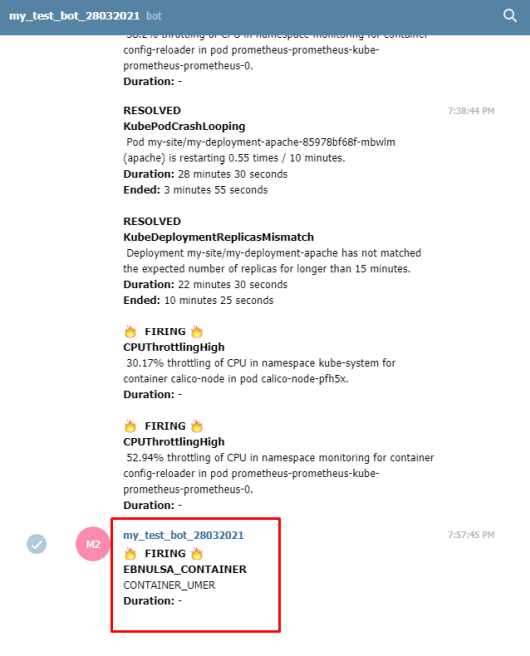

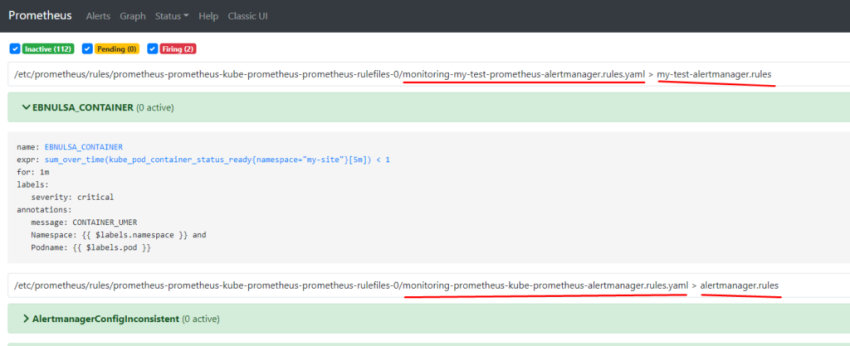

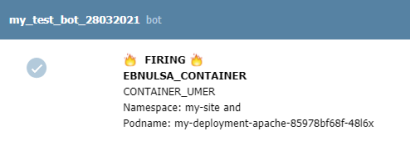

8.Настройка алерта для определённого неймспейса

9.Добавление оповещений и по email

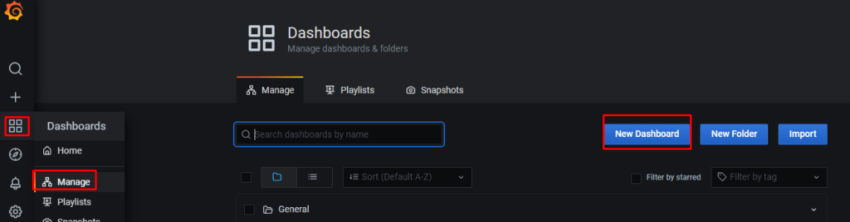

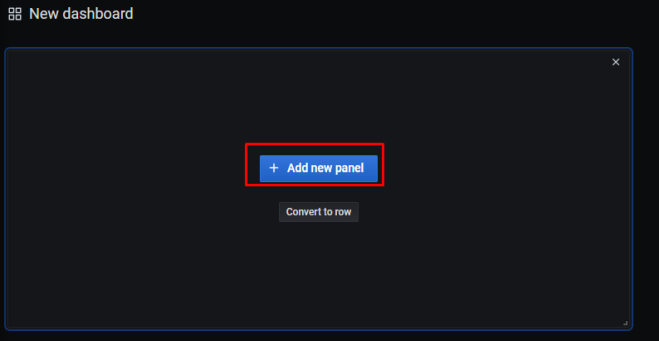

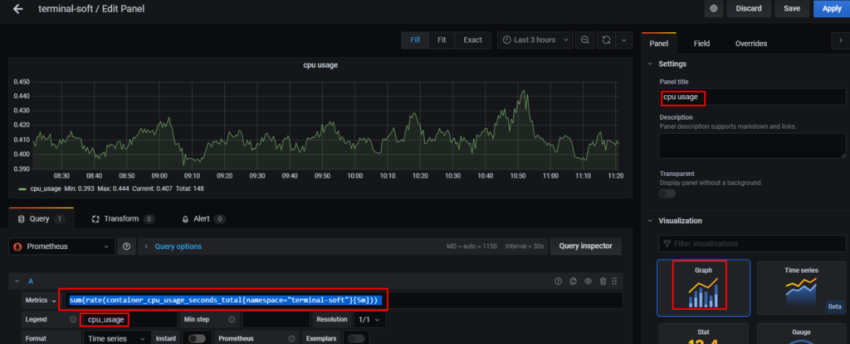

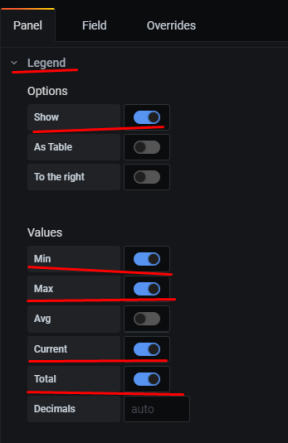

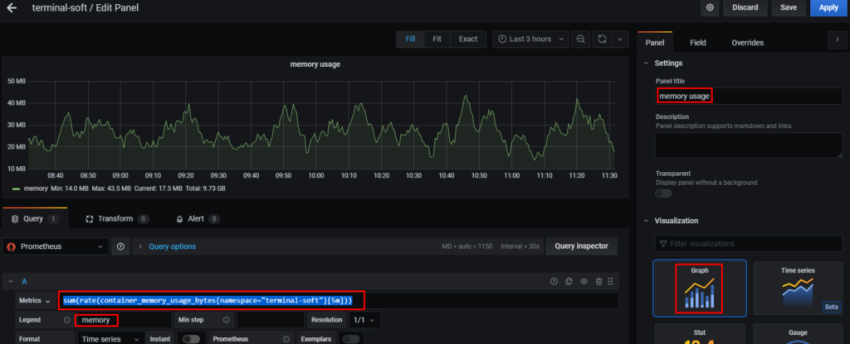

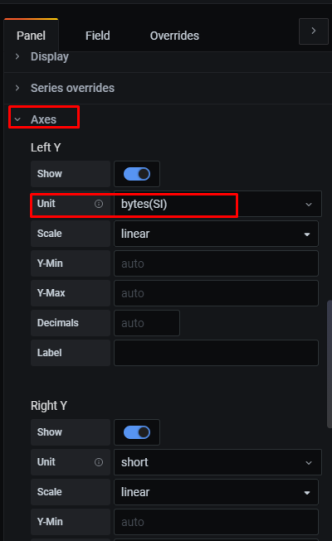

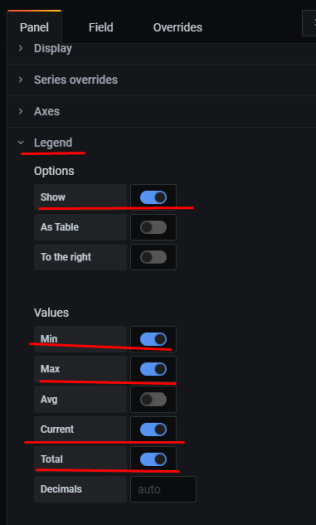

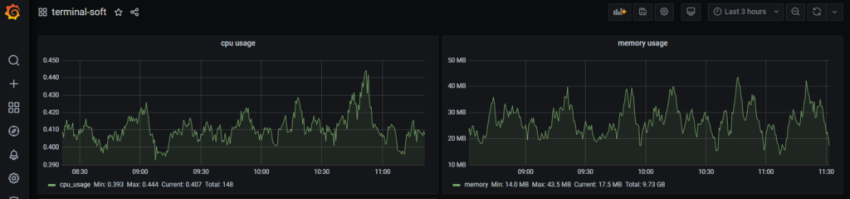

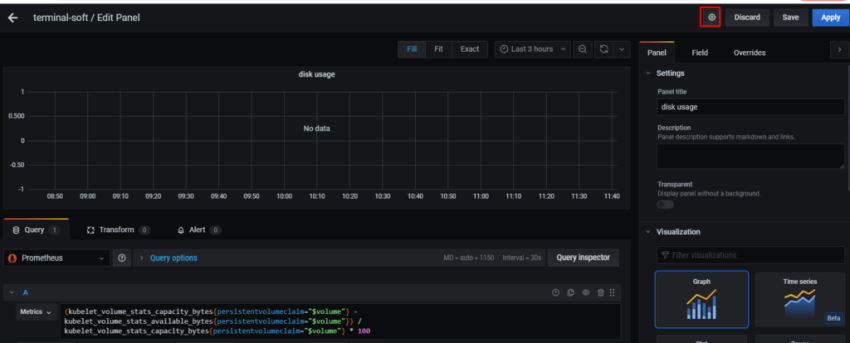

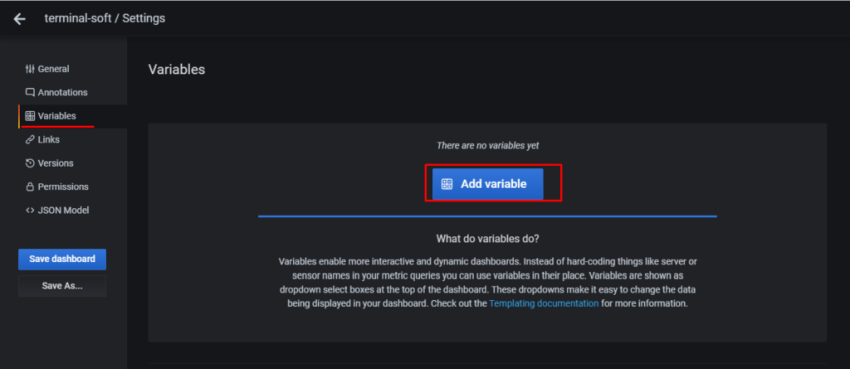

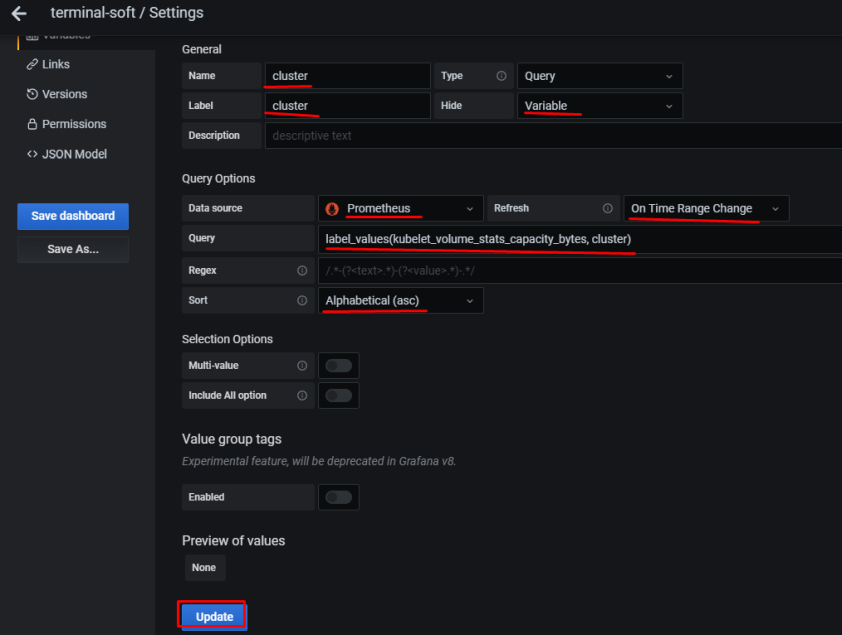

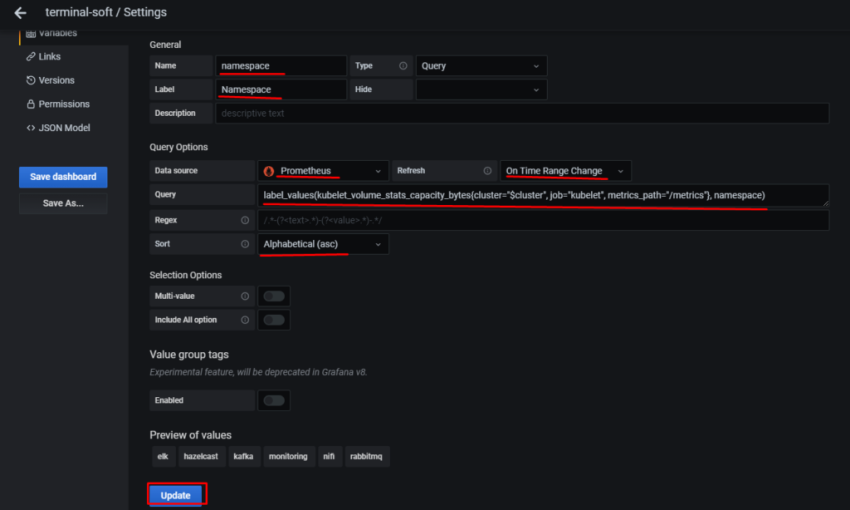

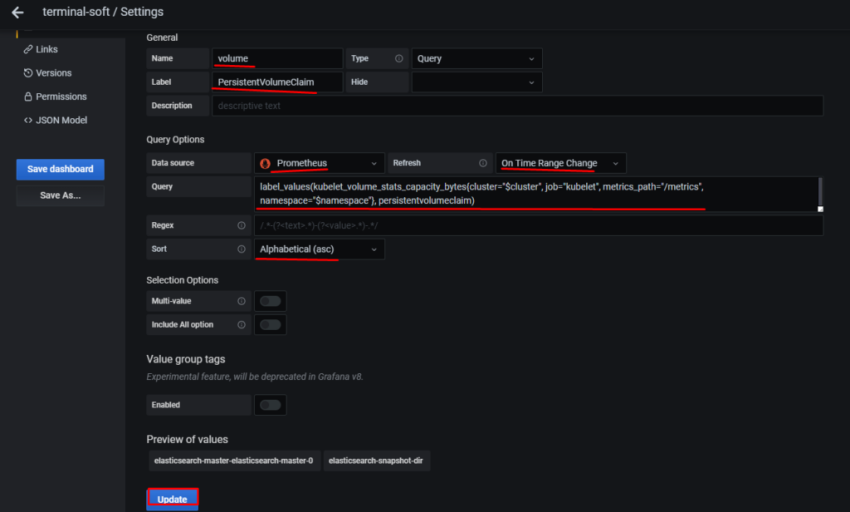

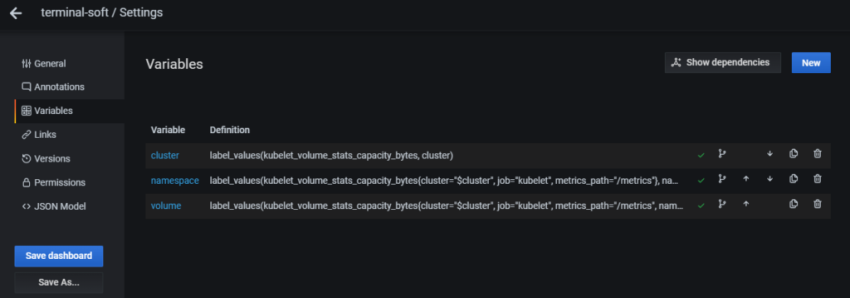

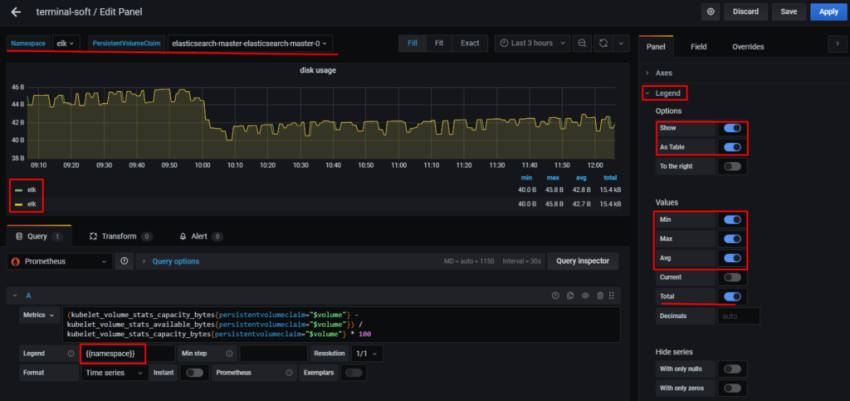

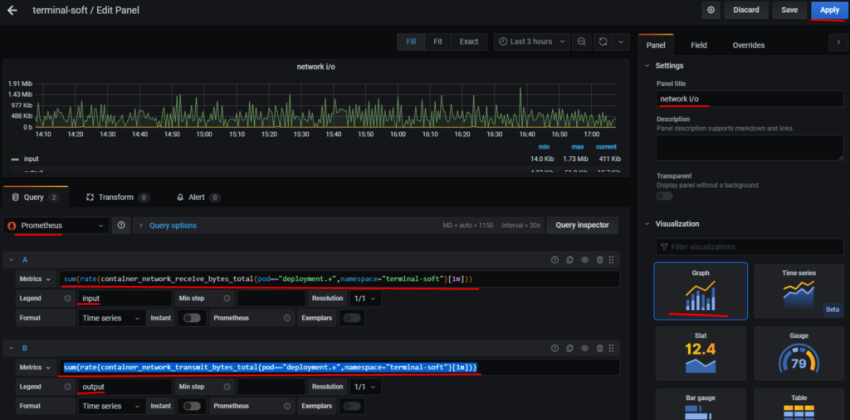

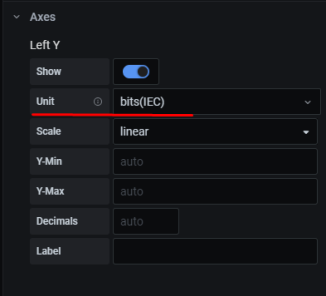

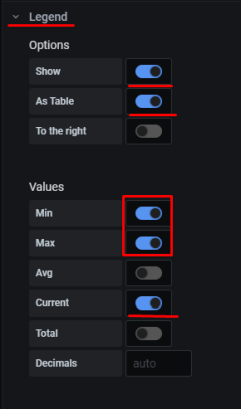

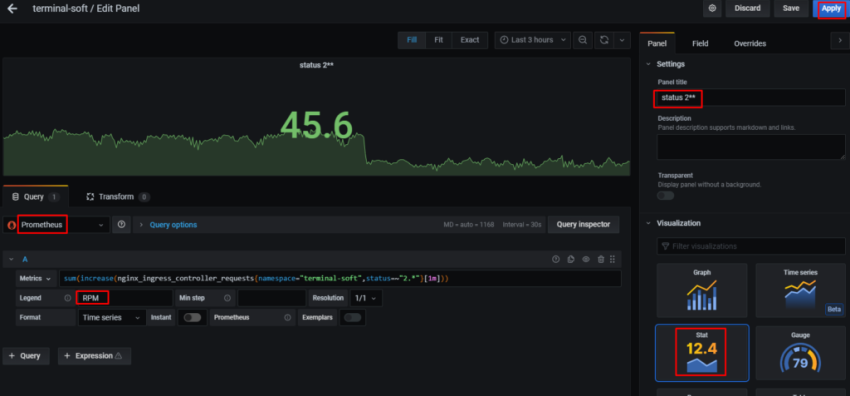

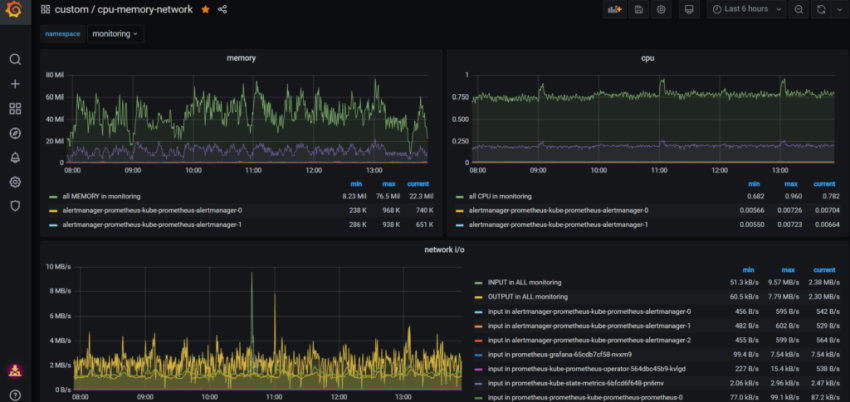

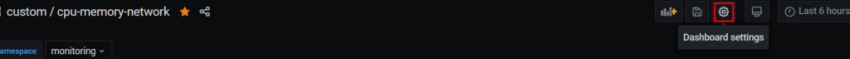

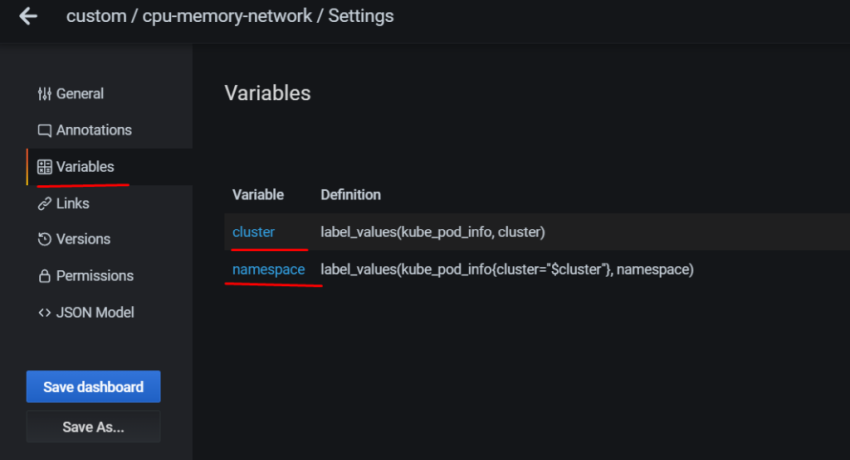

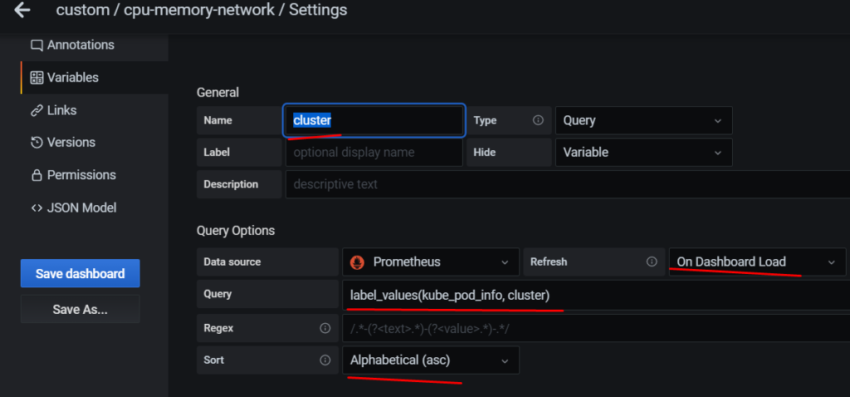

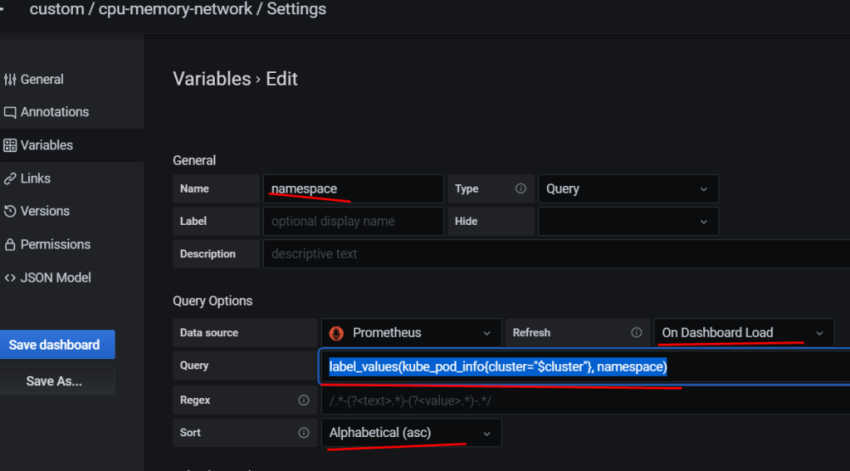

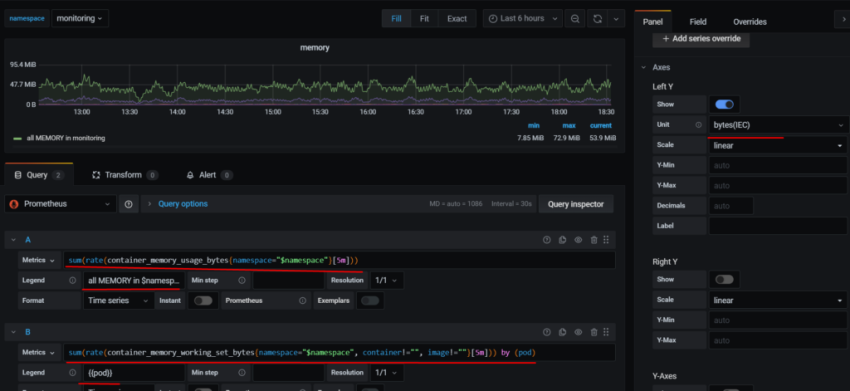

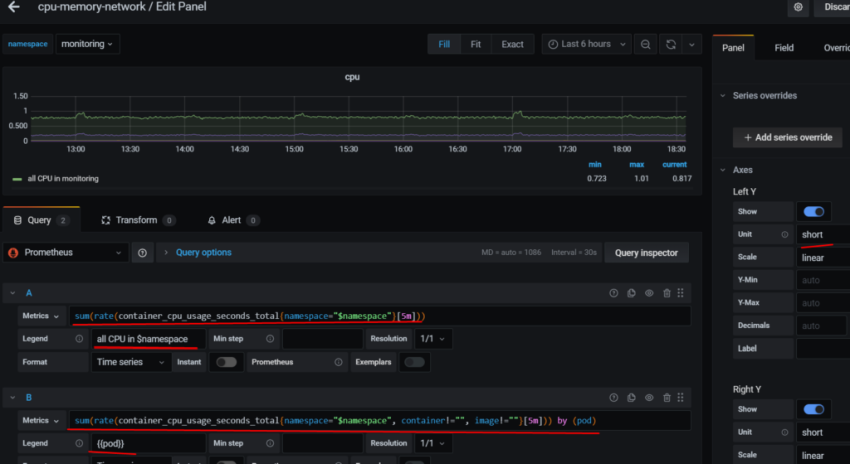

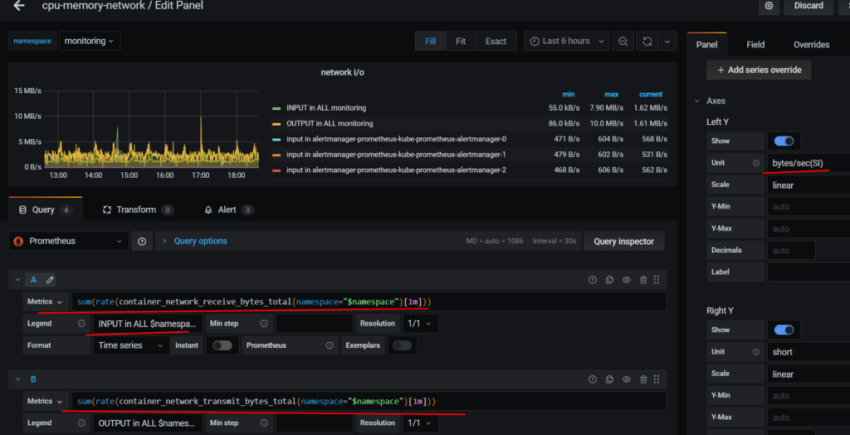

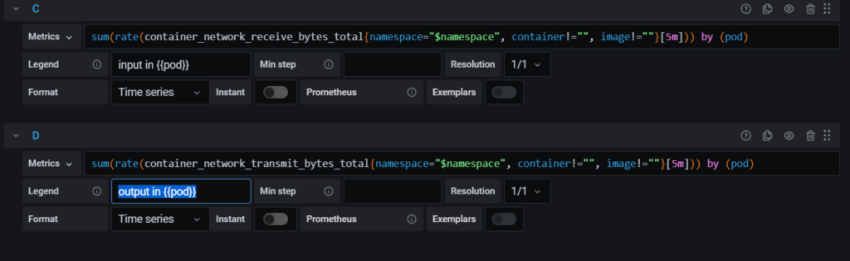

10. Настройка графиков в grafana

git clone https://github.com/prometheus-community/helm-charts.git

cd helm-charts/charts/kube-prometheus-stack/

докачиваем чарты:

helm dep update

создаём namescpase в котором будет всё крутиться:

kubectl create ns monitoring

теперь рассмотрим что правим в переменных у helm chart:

[root@prod-vsrv-kubemaster1 charts]# vim kube-prometheus-stack/values.yaml

namespaceOverride: «monitoring»

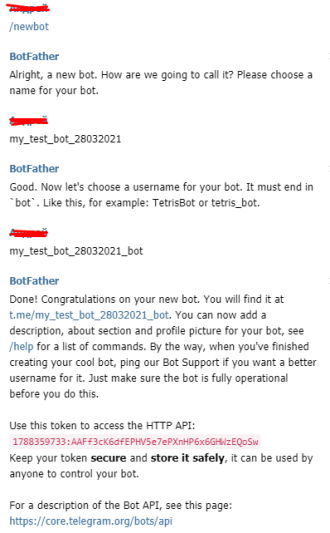

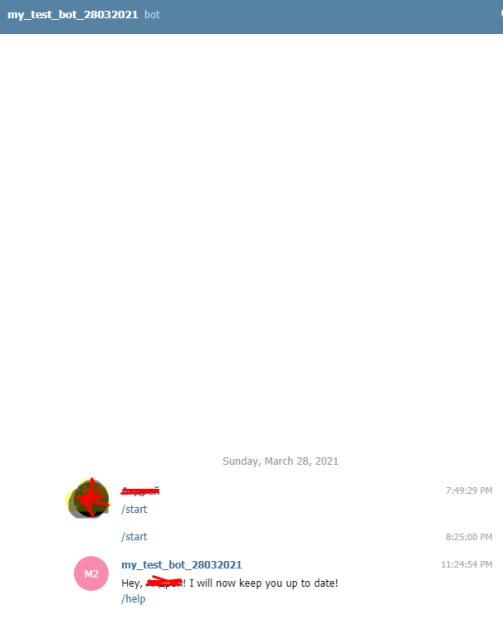

для работы telegram бота:

## Alertmanager configuration directives

## ref: https://prometheus.io/docs/alerting/configuration/#configuration-file

## https://prometheus.io/webtools/alerting/routing-tree-editor/

##

config:

global:

resolve_timeout: 5m

route:

receiver: 'telegram'

routes:

- match:

severity: critical

repeat_interval: 48h

continue: true

receiver: 'telegram'

- match:

alertname: Watchdog

repeat_interval: 48h

continue: true

receiver: 'telegram'

receivers:

- name: 'telegram'

webhook_configs:

- send_resolved: true

url: 'http://alertmanager-bot:8080'

templates:

- '/etc/alertmanager/config/*.tmpl'

настраиваем ingress у alertmanager:

ingress:

enabled: true

hosts:

- alertmanager.prod.test.local

paths:

- /

настраиваем volume для Alertmanager отмечу что в кластере настроен nfs-provisioner — nfs-storageclass

ingress:

enabled: true

hosts:

- alertmanager.prod.test.local

paths:

- /

теперь настроим grafana

тут указываем ingress а также добавляем хранение dashboard в nfs storage-class

grafana:

enabled: true

namespaceOverride: "monitoring"

## Deploy default dashboards.

##

defaultDashboardsEnabled: true

adminPassword: prom-operator

ingress:

## If true, Grafana Ingress will be created

##

enabled: true

labels: {}

## Hostnames.

## Must be provided if Ingress is enable.

##

hosts:

- grafana.prod.test.local

#hosts: []

## Path for grafana ingress

path: /

## TLS configuration for grafana Ingress

## Secret must be manually created in the namespace

##

tls: []

# - secretName: grafana-general-tls

# hosts:

# - grafana.example.com

persistence:

type: pvc

enabled: true

storageClassName: nfs-storageclass

accessModes:

- ReadWriteMany

size: 5Gi

# annotations: {}

finalizers:

- kubernetes.io/pvc-protection

## If using kubeControllerManager.endpoints only the port and targetPort are used

##

service:

port: 10252

targetPort: 10252

selector:

k8s-app: kube-controller-manager

# component: kube-controller-manager

## If using kubeScheduler.endpoints only the port and targetPort are used

##

service:

port: 10251

targetPort: 10251

selector:

k8s-app: kube-scheduler

# component: kube-scheduler

## Configuration for kube-state-metrics subchart

##

kube-state-metrics:

namespaceOverride: "monitoring"

rbac:

create: true

podSecurityPolicy:

enabled: true

## Configuration for prometheus-node-exporter subchart

##

prometheus-node-exporter:

namespaceOverride: "monitoring"

теперь настраиваем ingress для prometheus

ingress:

enabled: true

annotations: {}

labels: {}

## Hostnames.

## Must be provided if Ingress is enabled.

##

hosts:

- prometheus.prod.test.local

## Paths to use for ingress rules -

##

paths:

- /

а так же volume:

## Prometheus StorageSpec for persistent data

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/user-guides/storage.md

##

storageSpec:

volumeClaimTemplate:

spec:

storageClassName: nfs-storageclass

accessModes: ["ReadWriteMany"]

resources:

requests:

storage: 10Gi

и теперь важная фишка, добавление label который надо будет добавить на все неймспейсы:

## Namespaces to be selected for ServiceMonitor discovery.

##

serviceMonitorNamespaceSelector:

matchLabels:

prometheus: enabled

## Log level for Alertmanager to be configured with.

##

logLevel: info

## Size is the expected size of the alertmanager cluster. The controller will eventually make the size of the

## running cluster equal to the expected size.

replicas: 3

также правим:

## Enable scraping /metrics/resource from kubelet's service

## This is disabled by default because container metrics are already exposed by cAdvisor

##

resource: true

для выставления срока хранения данных можем поменять следующее значение:

## Time duration Alertmanager shall retain data for. Default is '120h', and must match the regular expression

## [0-9]+(ms|s|m|h) (milliseconds seconds minutes hours).

##

retention: 120h

запускаем теперь helm chart

[root@prod-vsrv-kubemaster1 charts]# helm upgrade —install -name prometheus kube-prometheus-stack/ -f kube-prometheus-stack/values.yaml —namespace monitoring

Release "prometheus" does not exist. Installing it now.

NAME: prometheus

LAST DEPLOYED: Thu Mar 4 13:25:07 2021

NAMESPACE: monitoring

STATUS: deployed

REVISION: 1

NOTES:

kube-prometheus-stack has been installed. Check its status by running:

kubectl --namespace monitoring get pods -l "release=prometheus"

Visit https://github.com/prometheus-operator/kube-prometheus for instructions on how to create & configure Alertmanager and Prometheus instances using the Operator.

видим что при запуске добавился label release=prometheus — проверяем:

kubectl describe pod prometheus-kube-prometheus-operator-659d5f8674-qxrf5 -n monitoring | grep -i release

release=prometheus

смотрим label на всех неймсмейсах:

kubectl get ns —show-labels

NAME STATUS AGE LABELS

default Active 192d <none>

elk Active 63d <none>

ingress-nginx Active 192d name=ingress-nginx

keda Active 86d <none>

kube-node-lease Active 192d <none>

kube-public Active 192d <none>

kube-system Active 192d name=kube-system

m-logstash-megabuilder Active 12d <none>

monitoring Active 3h15m <none>

terminal-soft Active 176d <none>

проставим на них label release=prometheus

kubectl label namespace —all «prometheus=enabled»

проверяем:

kubectl get ns —show-labels

NAME STATUS AGE LABELS

default Active 192d prometheus=enabled

elk Active 63d prometheus=enabled

ingress-nginx Active 192d name=ingress-nginx,prometheus=enabled

keda Active 86d prometheus=enabled

kube-node-lease Active 192d prometheus=enabled

kube-public Active 192d prometheus=enabled

kube-system Active 192d name=kube-system,prometheus=enabled

m-logstash-megabuilder Active 12d prometheus=enabled

monitoring Active 3h16m prometheus=enabled

terminal-soft Active 176d prometheus=enabled

теперь настроим сбор метрик с ingress controller,

создаём сервис для ingress. Указываем namespace в котором работает ingress, так же необходим label app.kubernetes.io/name: ingress-nginx данный лейб смотрим так:

kubectl describe pod -n ingress-nginx ingress-nginx-controller-vqjkl | grep -A3 Labels

Labels: app.kubernetes.io/name=ingress-nginx

app.kubernetes.io/part-of=ingress-nginx

controller-revision-hash=bd6d56f49

pod-template-generation=1

mkdir exporter-ingres

cat exporter-ingres/service.yaml

apiVersion: v1

kind: Service

metadata:

labels:

app.kubernetes.io/name: ingress-nginx

release: prometheus

name: ingress-nginx

namespace: ingress-nginx

spec:

ports:

- name: http

port: 80

protocol: TCP

targetPort: 80

- name: https

port: 443

protocol: TCP

targetPort: 443

- name: prometheus

port: 10254

protocol: TCP

targetPort: 10254

selector:

app.kubernetes.io/name: ingress-nginx

В данном файле так же обращаем внимание на:

name: prometheus

на это имя будет натравлен port у ServiceMonitor

теперь создаём ServiceMonitor, он будет создавать в prometheus target с метриками ingress controller:

cat exporter-ingres/service-monitor.yaml

apiVersion: monitoring.coreos.com/v1

kind: ServiceMonitor

metadata:

labels:

app: ingress-nginx

release: prometheus

name: ingress-nginx

namespace: monitoring

spec:

endpoints:

- honorLabels: true

interval: 10s

path: /metrics

port: prometheus

scheme: http

scrapeTimeout: 10s

namespaceSelector:

any: true

selector:

matchLabels:

app.kubernetes.io/name: ingress-nginx

release: prometheus

также правим:

## Enable scraping /metrics/resource from kubelet's service

## This is disabled by default because container metrics are already exposed by cAdvisor

##

resource: true

применяем:

[root@prod-vsrv-kubemaster1 charts]# kubectl apply -f exporter-ingres/service.yaml -f exporter-ingres/service-monitor.yaml

через пару минуток проверяем в prometheus

общий вид у файла values.yaml будет следующий:

cat helm-charts/charts/kube-prometheus-stack/values.yaml

# Default values for kube-prometheus-stack.

# This is a YAML-formatted file.

# Declare variables to be passed into your templates.

## Provide a name in place of kube-prometheus-stack for `app:` labels

##

nameOverride: ""

## Override the deployment namespace

##

namespaceOverride: "monitoring"

## Provide a k8s version to auto dashboard import script example: kubeTargetVersionOverride: 1.16.6

##

kubeTargetVersionOverride: ""

## Provide a name to substitute for the full names of resources

##

fullnameOverride: ""

## Labels to apply to all resources

##

commonLabels: {}

# scmhash: abc123

# myLabel: aakkmd

## Create default rules for monitoring the cluster

##

defaultRules:

create: true

rules:

alertmanager: true

etcd: true

general: true

k8s: true

kubeApiserver: true

kubeApiserverAvailability: true

kubeApiserverError: true

kubeApiserverSlos: true

kubelet: true

kubePrometheusGeneral: true

kubePrometheusNodeAlerting: true

kubePrometheusNodeRecording: true

kubernetesAbsent: true

kubernetesApps: true

kubernetesResources: true

kubernetesStorage: true

kubernetesSystem: true

kubeScheduler: true

kubeStateMetrics: true

network: true

node: true

prometheus: true

prometheusOperator: true

time: true

## Runbook url prefix for default rules

runbookUrl: https://github.com/kubernetes-monitoring/kubernetes-mixin/tree/master/runbook.md#

## Reduce app namespace alert scope

appNamespacesTarget: ".*"

## Labels for default rules

labels: {}

## Annotations for default rules

annotations: {}

## Additional labels for PrometheusRule alerts

additionalRuleLabels: {}

## Deprecated way to provide custom recording or alerting rules to be deployed into the cluster.

##

# additionalPrometheusRules: []

# - name: my-rule-file

# groups:

# - name: my_group

# rules:

# - record: my_record

# expr: 100 * my_record

## Provide custom recording or alerting rules to be deployed into the cluster.

##

additionalPrometheusRulesMap: {}

# rule-name:

# groups:

# - name: my_group

# rules:

# - record: my_record

# expr: 100 * my_record

##

global:

rbac:

create: true

pspEnabled: true

pspAnnotations: {}

## Specify pod annotations

## Ref: https://kubernetes.io/docs/concepts/policy/pod-security-policy/#apparmor

## Ref: https://kubernetes.io/docs/concepts/policy/pod-security-policy/#seccomp

## Ref: https://kubernetes.io/docs/concepts/policy/pod-security-policy/#sysctl

##

# seccomp.security.alpha.kubernetes.io/allowedProfileNames: '*'

# seccomp.security.alpha.kubernetes.io/defaultProfileName: 'docker/default'

# apparmor.security.beta.kubernetes.io/defaultProfileName: 'runtime/default'

## Reference to one or more secrets to be used when pulling images

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/

##

imagePullSecrets: []

# - name: "image-pull-secret"

## Configuration for alertmanager

## ref: https://prometheus.io/docs/alerting/alertmanager/

##

alertmanager:

## Deploy alertmanager

##

enabled: true

## Api that prometheus will use to communicate with alertmanager. Possible values are v1, v2

##

apiVersion: v2

## Service account for Alertmanager to use.

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/configure-service-account/

##

serviceAccount:

create: true

name: ""

annotations: {}

## Configure pod disruption budgets for Alertmanager

## ref: https://kubernetes.io/docs/tasks/run-application/configure-pdb/#specifying-a-poddisruptionbudget

## This configuration is immutable once created and will require the PDB to be deleted to be changed

## https://github.com/kubernetes/kubernetes/issues/45398

##

podDisruptionBudget:

enabled: false

minAvailable: 1

maxUnavailable: ""

## Alertmanager configuration directives

## ref: https://prometheus.io/docs/alerting/configuration/#configuration-file

## https://prometheus.io/webtools/alerting/routing-tree-editor/

##

config:

global:

resolve_timeout: 5m

route:

receiver: 'telegram'

routes:

- match:

severity: critical

repeat_interval: 48h

continue: true

receiver: 'telegram'

- match:

alertname: Watchdog

repeat_interval: 48h

continue: true

receiver: 'telegram'

receivers:

- name: 'telegram'

webhook_configs:

- send_resolved: true

url: 'http://alertmanager-bot:8080'

# config:

# global:

# resolve_timeout: 5m

# route:

# group_by: ['job']

# group_wait: 30s

# group_interval: 5m

# repeat_interval: 12h

# receiver: 'null'

# routes:

# - match:

# alertname: Watchdog

# receiver: 'null'

# receivers:

# - name: 'null'

templates:

- '/etc/alertmanager/config/*.tmpl'

## Pass the Alertmanager configuration directives through Helm's templating

## engine. If the Alertmanager configuration contains Alertmanager templates,

## they'll need to be properly escaped so that they are not interpreted by

## Helm

## ref: https://helm.sh/docs/developing_charts/#using-the-tpl-function

## https://prometheus.io/docs/alerting/configuration/#tmpl_string

## https://prometheus.io/docs/alerting/notifications/

## https://prometheus.io/docs/alerting/notification_examples/

tplConfig: false

## Alertmanager template files to format alerts

## By default, templateFiles are placed in /etc/alertmanager/config/ and if

## they have a .tmpl file suffix will be loaded. See config.templates above

## to change, add other suffixes. If adding other suffixes, be sure to update

## config.templates above to include those suffixes.

## ref: https://prometheus.io/docs/alerting/notifications/

## https://prometheus.io/docs/alerting/notification_examples/

##

templateFiles: {}

#

## An example template:

# template_1.tmpl: |-

# {{ define "cluster" }}{{ .ExternalURL | reReplaceAll ".*alertmanager\.(.*)" "$1" }}{{ end }}

#

# {{ define "slack.myorg.text" }}

# {{- $root := . -}}

# {{ range .Alerts }}

# *Alert:* {{ .Annotations.summary }} - `{{ .Labels.severity }}`

# *Cluster:* {{ template "cluster" $root }}

# *Description:* {{ .Annotations.description }}

# *Graph:* <{{ .GeneratorURL }}|:chart_with_upwards_trend:>

# *Runbook:* <{{ .Annotations.runbook }}|:spiral_note_pad:>

# *Details:*

# {{ range .Labels.SortedPairs }} • *{{ .Name }}:* `{{ .Value }}`

# {{ end }}

# {{ end }}

# {{ end }}

ingress:

enabled: true

# For Kubernetes >= 1.18 you should specify the ingress-controller via the field ingressClassName

# See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#specifying-the-class-of-an-ingress

# ingressClassName: nginx

annotations: {}

labels: {}

## Hosts must be provided if Ingress is enabled.

##

hosts:

- alertmanager.prod.test.local

## Paths to use for ingress rules - one path should match the alertmanagerSpec.routePrefix

##

paths:

- /

## For Kubernetes >= 1.18 you should specify the pathType (determines how Ingress paths should be matched)

## See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#better-path-matching-with-path-types

# pathType: ImplementationSpecific

## TLS configuration for Alertmanager Ingress

## Secret must be manually created in the namespace

##

tls: []

# - secretName: alertmanager-general-tls

# hosts:

# - alertmanager.example.com

## Configuration for Alertmanager secret

##

secret:

annotations: {}

## Configuration for creating an Ingress that will map to each Alertmanager replica service

## alertmanager.servicePerReplica must be enabled

##

ingressPerReplica:

enabled: false

# For Kubernetes >= 1.18 you should specify the ingress-controller via the field ingressClassName

# See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#specifying-the-class-of-an-ingress

# ingressClassName: nginx

annotations: {}

labels: {}

## Final form of the hostname for each per replica ingress is

## {{ ingressPerReplica.hostPrefix }}-{{ $replicaNumber }}.{{ ingressPerReplica.hostDomain }}

##

## Prefix for the per replica ingress that will have `-$replicaNumber`

## appended to the end

hostPrefix: ""

## Domain that will be used for the per replica ingress

hostDomain: ""

## Paths to use for ingress rules

##

paths: []

# - /

## For Kubernetes >= 1.18 you should specify the pathType (determines how Ingress paths should be matched)

## See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#better-path-matching-with-path-types

# pathType: ImplementationSpecific

## Secret name containing the TLS certificate for alertmanager per replica ingress

## Secret must be manually created in the namespace

tlsSecretName: ""

## Separated secret for each per replica Ingress. Can be used together with cert-manager

##

tlsSecretPerReplica:

enabled: false

## Final form of the secret for each per replica ingress is

## {{ tlsSecretPerReplica.prefix }}-{{ $replicaNumber }}

##

prefix: "alertmanager"

## Configuration for Alertmanager service

##

service:

annotations: {}

labels: {}

clusterIP: ""

## Port for Alertmanager Service to listen on

##

port: 9093

## To be used with a proxy extraContainer port

##

targetPort: 9093

## Port to expose on each node

## Only used if service.type is 'NodePort'

##

nodePort: 30903

## List of IP addresses at which the Prometheus server service is available

## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips

##

## Additional ports to open for Alertmanager service

additionalPorts: []

externalIPs: []

loadBalancerIP: ""

loadBalancerSourceRanges: []

## Service type

##

type: ClusterIP

## Configuration for creating a separate Service for each statefulset Alertmanager replica

##

servicePerReplica:

enabled: false

annotations: {}

## Port for Alertmanager Service per replica to listen on

##

port: 9093

## To be used with a proxy extraContainer port

targetPort: 9093

## Port to expose on each node

## Only used if servicePerReplica.type is 'NodePort'

##

nodePort: 30904

## Loadbalancer source IP ranges

## Only used if servicePerReplica.type is "loadbalancer"

loadBalancerSourceRanges: []

## Service type

##

type: ClusterIP

## If true, create a serviceMonitor for alertmanager

##

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

selfMonitor: true

## scheme: HTTP scheme to use for scraping. Can be used with `tlsConfig` for example if using istio mTLS.

scheme: ""

## tlsConfig: TLS configuration to use when scraping the endpoint. For example if using istio mTLS.

## Of type: https://github.com/coreos/prometheus-operator/blob/master/Documentation/api.md#tlsconfig

tlsConfig: {}

bearerTokenFile:

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Settings affecting alertmanagerSpec

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#alertmanagerspec

##

alertmanagerSpec:

## Standard object’s metadata. More info: https://github.com/kubernetes/community/blob/master/contributors/devel/sig-architecture/api-conventions.md#metadata

## Metadata Labels and Annotations gets propagated to the Alertmanager pods.

##

podMetadata: {}

## Image of Alertmanager

##

image:

repository: quay.io/prometheus/alertmanager

tag: v0.21.0

sha: ""

## If true then the user will be responsible to provide a secret with alertmanager configuration

## So when true the config part will be ignored (including templateFiles) and the one in the secret will be used

##

useExistingSecret: false

## Secrets is a list of Secrets in the same namespace as the Alertmanager object, which shall be mounted into the

## Alertmanager Pods. The Secrets are mounted into /etc/alertmanager/secrets/.

##

secrets: []

## ConfigMaps is a list of ConfigMaps in the same namespace as the Alertmanager object, which shall be mounted into the Alertmanager Pods.

## The ConfigMaps are mounted into /etc/alertmanager/configmaps/.

##

configMaps: []

## ConfigSecret is the name of a Kubernetes Secret in the same namespace as the Alertmanager object, which contains configuration for

## this Alertmanager instance. Defaults to 'alertmanager-' The secret is mounted into /etc/alertmanager/config.

##

# configSecret:

## AlertmanagerConfigs to be selected to merge and configure Alertmanager with.

##

alertmanagerConfigSelector: {}

## Example which selects all alertmanagerConfig resources

## with label "alertconfig" with values any of "example-config" or "example-config-2"

# alertmanagerConfigSelector:

# matchExpressions:

# - key: alertconfig

# operator: In

# values:

# - example-config

# - example-config-2

#

## Example which selects all alertmanagerConfig resources with label "role" set to "example-config"

# alertmanagerConfigSelector:

# matchLabels:

# role: example-config

## Namespaces to be selected for AlertmanagerConfig discovery. If nil, only check own namespace.

##

alertmanagerConfigNamespaceSelector: {}

## Example which selects all namespaces

## with label "alertmanagerconfig" with values any of "example-namespace" or "example-namespace-2"

# alertmanagerConfigNamespaceSelector:

# matchExpressions:

# - key: alertmanagerconfig

# operator: In

# values:

# - example-namespace

# - example-namespace-2

## Example which selects all namespaces with label "alertmanagerconfig" set to "enabled"

# alertmanagerConfigNamespaceSelector:

# matchLabels:

# alertmanagerconfig: enabled

## Define Log Format

# Use logfmt (default) or json logging

logFormat: logfmt

## Log level for Alertmanager to be configured with.

##

logLevel: info

## Size is the expected size of the alertmanager cluster. The controller will eventually make the size of the

## running cluster equal to the expected size.

replicas: 3

## Time duration Alertmanager shall retain data for. Default is '120h', and must match the regular expression

## [0-9]+(ms|s|m|h) (milliseconds seconds minutes hours).

##

retention: 120h

## Storage is the definition of how storage will be used by the Alertmanager instances.

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/user-guides/storage.md

##

storage:

volumeClaimTemplate:

spec:

storageClassName: nfs-storageclass

accessModes: ["ReadWriteMany"]

resources:

requests:

storage: 10Gi

# selector: {}

## The external URL the Alertmanager instances will be available under. This is necessary to generate correct URLs. This is necessary if Alertmanager is not served from root of a DNS name. string false

##

externalUrl:

## The route prefix Alertmanager registers HTTP handlers for. This is useful, if using ExternalURL and a proxy is rewriting HTTP routes of a request, and the actual ExternalURL is still true,

## but the server serves requests under a different route prefix. For example for use with kubectl proxy.

##

routePrefix: /

## If set to true all actions on the underlying managed objects are not going to be performed, except for delete actions.

##

paused: false

## Define which Nodes the Pods are scheduled on.

## ref: https://kubernetes.io/docs/user-guide/node-selection/

##

nodeSelector: {}

## Define resources requests and limits for single Pods.

## ref: https://kubernetes.io/docs/user-guide/compute-resources/

##

resources: {}

# requests:

# memory: 400Mi

## Pod anti-affinity can prevent the scheduler from placing Prometheus replicas on the same node.

## The default value "soft" means that the scheduler should *prefer* to not schedule two replica pods onto the same node but no guarantee is provided.

## The value "hard" means that the scheduler is *required* to not schedule two replica pods onto the same node.

## The value "" will disable pod anti-affinity so that no anti-affinity rules will be configured.

##

podAntiAffinity: ""

## If anti-affinity is enabled sets the topologyKey to use for anti-affinity.

## This can be changed to, for example, failure-domain.beta.kubernetes.io/zone

##

podAntiAffinityTopologyKey: kubernetes.io/hostname

## Assign custom affinity rules to the alertmanager instance

## ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/

##

affinity: {}

# nodeAffinity:

# requiredDuringSchedulingIgnoredDuringExecution:

# nodeSelectorTerms:

# - matchExpressions:

# - key: kubernetes.io/e2e-az-name

# operator: In

# values:

# - e2e-az1

# - e2e-az2

## If specified, the pod's tolerations.

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

##

tolerations: []

# - key: "key"

# operator: "Equal"

# value: "value"

# effect: "NoSchedule"

## If specified, the pod's topology spread constraints.

## ref: https://kubernetes.io/docs/concepts/workloads/pods/pod-topology-spread-constraints/

##

topologySpreadConstraints: []

# - maxSkew: 1

# topologyKey: topology.kubernetes.io/zone

# whenUnsatisfiable: DoNotSchedule

# labelSelector:

# matchLabels:

# app: alertmanager

## SecurityContext holds pod-level security attributes and common container settings.

## This defaults to non root user with uid 1000 and gid 2000. *v1.PodSecurityContext false

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/

##

securityContext:

runAsGroup: 2000

runAsNonRoot: true

runAsUser: 1000

fsGroup: 2000

## ListenLocal makes the Alertmanager server listen on loopback, so that it does not bind against the Pod IP.

## Note this is only for the Alertmanager UI, not the gossip communication.

##

listenLocal: false

## Containers allows injecting additional containers. This is meant to allow adding an authentication proxy to an Alertmanager pod.

##

containers: []

# Additional volumes on the output StatefulSet definition.

volumes: []

# Additional VolumeMounts on the output StatefulSet definition.

volumeMounts: []

## InitContainers allows injecting additional initContainers. This is meant to allow doing some changes

## (permissions, dir tree) on mounted volumes before starting prometheus

initContainers: []

## Priority class assigned to the Pods

##

priorityClassName: ""

## AdditionalPeers allows injecting a set of additional Alertmanagers to peer with to form a highly available cluster.

##

additionalPeers: []

## PortName to use for Alert Manager.

##

portName: "web"

## ClusterAdvertiseAddress is the explicit address to advertise in cluster. Needs to be provided for non RFC1918 [1] (public) addresses. [1] RFC1918: https://tools.ietf.org/html/rfc1918

##

clusterAdvertiseAddress: false

## ForceEnableClusterMode ensures Alertmanager does not deactivate the cluster mode when running with a single replica.

## Use case is e.g. spanning an Alertmanager cluster across Kubernetes clusters with a single replica in each.

forceEnableClusterMode: false

## Using default values from https://github.com/grafana/helm-charts/blob/main/charts/grafana/values.yaml

##

grafana:

enabled: true

namespaceOverride: "monitoring"

## Deploy default dashboards.

##

defaultDashboardsEnabled: true

adminPassword: prom-operator

ingress:

## If true, Grafana Ingress will be created

##

enabled: true

## Annotations for Grafana Ingress

##

annotations: {}

# kubernetes.io/ingress.class: nginx

# kubernetes.io/tls-acme: "true"

## Labels to be added to the Ingress

##

labels: {}

## Hostnames.

## Must be provided if Ingress is enable.

##

hosts:

- grafana.prod.test.local

#hosts: []

## Path for grafana ingress

path: /

## TLS configuration for grafana Ingress

## Secret must be manually created in the namespace

##

tls: []

# - secretName: grafana-general-tls

# hosts:

# - grafana.example.com

sidecar:

dashboards:

enabled: true

label: grafana_dashboard

## Annotations for Grafana dashboard configmaps

##

annotations: {}

multicluster: false

datasources:

enabled: true

defaultDatasourceEnabled: true

# If not defined, will use prometheus.prometheusSpec.scrapeInterval or its default

# defaultDatasourceScrapeInterval: 15s

## Annotations for Grafana datasource configmaps

##

annotations: {}

## Create datasource for each Pod of Prometheus StatefulSet;

## this uses headless service `prometheus-operated` which is

## created by Prometheus Operator

## ref: https://git.io/fjaBS

createPrometheusReplicasDatasources: false

label: grafana_datasource

extraConfigmapMounts: []

# - name: certs-configmap

# mountPath: /etc/grafana/ssl/

# configMap: certs-configmap

# readOnly: true

## Configure additional grafana datasources (passed through tpl)

## ref: http://docs.grafana.org/administration/provisioning/#datasources

additionalDataSources: []

# - name: prometheus-sample

# access: proxy

# basicAuth: true

# basicAuthPassword: pass

# basicAuthUser: daco

# editable: false

# jsonData:

# tlsSkipVerify: true

# orgId: 1

# type: prometheus

# url: https://{{ printf "%s-prometheus.svc" .Release.Name }}:9090

# version: 1

## Passed to grafana subchart and used by servicemonitor below

##

service:

portName: service

## If true, create a serviceMonitor for grafana

##

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

selfMonitor: true

# Path to use for scraping metrics. Might be different if server.root_url is set

# in grafana.ini

path: "/metrics"

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Component scraping the kube api server

##

kubeApiServer:

enabled: true

tlsConfig:

serverName: kubernetes

insecureSkipVerify: false

## If your API endpoint address is not reachable (as in AKS) you can replace it with the kubernetes service

##

relabelings: []

# - sourceLabels:

# - __meta_kubernetes_namespace

# - __meta_kubernetes_service_name

# - __meta_kubernetes_endpoint_port_name

# action: keep

# regex: default;kubernetes;https

# - targetLabel: __address__

# replacement: kubernetes.default.svc:443

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

jobLabel: component

selector:

matchLabels:

component: apiserver

provider: kubernetes

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

## Component scraping the kubelet and kubelet-hosted cAdvisor

##

kubelet:

enabled: true

namespace: kube-system

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## Enable scraping the kubelet over https. For requirements to enable this see

## https://github.com/prometheus-operator/prometheus-operator/issues/926

##

https: true

## Enable scraping /metrics/cadvisor from kubelet's service

##

cAdvisor: true

## Enable scraping /metrics/probes from kubelet's service

##

probes: true

## Enable scraping /metrics/resource from kubelet's service

## This is disabled by default because container metrics are already exposed by cAdvisor

##

resource: true

# From kubernetes 1.18, /metrics/resource/v1alpha1 renamed to /metrics/resource

resourcePath: "/metrics/resource/v1alpha1"

## Metric relabellings to apply to samples before ingestion

##

cAdvisorMetricRelabelings: []

# - sourceLabels: [__name__, image]

# separator: ;

# regex: container_([a-z_]+);

# replacement: $1

# action: drop

# - sourceLabels: [__name__]

# separator: ;

# regex: container_(network_tcp_usage_total|network_udp_usage_total|tasks_state|cpu_load_average_10s)

# replacement: $1

# action: drop

## Metric relabellings to apply to samples before ingestion

##

probesMetricRelabelings: []

# - sourceLabels: [__name__, image]

# separator: ;

# regex: container_([a-z_]+);

# replacement: $1

# action: drop

# - sourceLabels: [__name__]

# separator: ;

# regex: container_(network_tcp_usage_total|network_udp_usage_total|tasks_state|cpu_load_average_10s)

# replacement: $1

# action: drop

# relabel configs to apply to samples before ingestion.

# metrics_path is required to match upstream rules and charts

##

cAdvisorRelabelings:

- sourceLabels: [__metrics_path__]

targetLabel: metrics_path

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

probesRelabelings:

- sourceLabels: [__metrics_path__]

targetLabel: metrics_path

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

resourceRelabelings:

- sourceLabels: [__metrics_path__]

targetLabel: metrics_path

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

metricRelabelings: []

# - sourceLabels: [__name__, image]

# separator: ;

# regex: container_([a-z_]+);

# replacement: $1

# action: drop

# - sourceLabels: [__name__]

# separator: ;

# regex: container_(network_tcp_usage_total|network_udp_usage_total|tasks_state|cpu_load_average_10s)

# replacement: $1

# action: drop

# relabel configs to apply to samples before ingestion.

# metrics_path is required to match upstream rules and charts

##

relabelings:

- sourceLabels: [__metrics_path__]

targetLabel: metrics_path

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Component scraping the kube controller manager

##

kubeControllerManager:

enabled: true

## If your kube controller manager is not deployed as a pod, specify IPs it can be found on

##

endpoints: []

# - 10.141.4.22

# - 10.141.4.23

# - 10.141.4.24

## If using kubeControllerManager.endpoints only the port and targetPort are used

##

service:

port: 10252

targetPort: 10252

selector:

k8s-app: kube-controller-manager

# component: kube-controller-manager

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## Enable scraping kube-controller-manager over https.

## Requires proper certs (not self-signed) and delegated authentication/authorization checks

##

https: false

# Skip TLS certificate validation when scraping

insecureSkipVerify: null

# Name of the server to use when validating TLS certificate

serverName: null

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Component scraping coreDns. Use either this or kubeDns

##

coreDns:

enabled: true

service:

port: 9153

targetPort: 9153

# selector:

# k8s-app: kube-dns

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Component scraping kubeDns. Use either this or coreDns

##

kubeDns:

enabled: false

service:

dnsmasq:

port: 10054

targetPort: 10054

skydns:

port: 10055

targetPort: 10055

# selector:

# k8s-app: kube-dns

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

dnsmasqMetricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

dnsmasqRelabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Component scraping etcd

##

kubeEtcd:

enabled: true

## If your etcd is not deployed as a pod, specify IPs it can be found on

##

endpoints: []

# - 10.141.4.22

# - 10.141.4.23

# - 10.141.4.24

## Etcd service. If using kubeEtcd.endpoints only the port and targetPort are used

##

service:

port: 2379

targetPort: 2379

# selector:

# component: etcd

## Configure secure access to the etcd cluster by loading a secret into prometheus and

## specifying security configuration below. For example, with a secret named etcd-client-cert

##

## serviceMonitor:

## scheme: https

## insecureSkipVerify: false

## serverName: localhost

## caFile: /etc/prometheus/secrets/etcd-client-cert/etcd-ca

## certFile: /etc/prometheus/secrets/etcd-client-cert/etcd-client

## keyFile: /etc/prometheus/secrets/etcd-client-cert/etcd-client-key

##

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

scheme: http

insecureSkipVerify: false

serverName: ""

caFile: ""

certFile: ""

keyFile: ""

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Component scraping kube scheduler

##

kubeScheduler:

enabled: true

## If your kube scheduler is not deployed as a pod, specify IPs it can be found on

##

endpoints: []

# - 10.141.4.22

# - 10.141.4.23

# - 10.141.4.24

## If using kubeScheduler.endpoints only the port and targetPort are used

##

service:

port: 10251

targetPort: 10251

selector:

k8s-app: kube-scheduler

# component: kube-scheduler

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## Enable scraping kube-scheduler over https.

## Requires proper certs (not self-signed) and delegated authentication/authorization checks

##

https: false

## Skip TLS certificate validation when scraping

insecureSkipVerify: null

## Name of the server to use when validating TLS certificate

serverName: null

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Component scraping kube proxy

##

kubeProxy:

enabled: true

## If your kube proxy is not deployed as a pod, specify IPs it can be found on

##

endpoints: []

# - 10.141.4.22

# - 10.141.4.23

# - 10.141.4.24

service:

port: 10249

targetPort: 10249

# selector:

# k8s-app: kube-proxy

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## Enable scraping kube-proxy over https.

## Requires proper certs (not self-signed) and delegated authentication/authorization checks

##

https: false

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

## Component scraping kube state metrics

##

kubeStateMetrics:

enabled: true

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## Override serviceMonitor selector

##

selectorOverride: {}

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Configuration for kube-state-metrics subchart

##

kube-state-metrics:

namespaceOverride: "monitoring"

rbac:

create: true

podSecurityPolicy:

enabled: true

## Deploy node exporter as a daemonset to all nodes

##

nodeExporter:

enabled: true

## Use the value configured in prometheus-node-exporter.podLabels

##

jobLabel: jobLabel

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## How long until a scrape request times out. If not set, the Prometheus default scape timeout is used.

##

scrapeTimeout: ""

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - sourceLabels: [__name__]

# separator: ;

# regex: ^node_mountstats_nfs_(event|operations|transport)_.+

# replacement: $1

# action: drop

## relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Configuration for prometheus-node-exporter subchart

##

prometheus-node-exporter:

namespaceOverride: "monitoring"

podLabels:

## Add the 'node-exporter' label to be used by serviceMonitor to match standard common usage in rules and grafana dashboards

##

jobLabel: node-exporter

extraArgs:

- --collector.filesystem.ignored-mount-points=^/(dev|proc|sys|var/lib/docker/.+|var/lib/kubelet/.+)($|/)

- --collector.filesystem.ignored-fs-types=^(autofs|binfmt_misc|bpf|cgroup2?|configfs|debugfs|devpts|devtmpfs|fusectl|hugetlbfs|iso9660|mqueue|nsfs|overlay|proc|procfs|pstore|rpc_pipefs|securityfs|selinuxfs|squashfs|sysfs|tracefs)$

## Manages Prometheus and Alertmanager components

##

prometheusOperator:

enabled: true

## Prometheus-Operator v0.39.0 and later support TLS natively.

##

tls:

enabled: true

# Value must match version names from https://golang.org/pkg/crypto/tls/#pkg-constants

tlsMinVersion: VersionTLS13

# The default webhook port is 10250 in order to work out-of-the-box in GKE private clusters and avoid adding firewall rules.

internalPort: 10250

## Admission webhook support for PrometheusRules resources added in Prometheus Operator 0.30 can be enabled to prevent incorrectly formatted

## rules from making their way into prometheus and potentially preventing the container from starting

admissionWebhooks:

failurePolicy: Fail

enabled: true

## A PEM encoded CA bundle which will be used to validate the webhook's server certificate.

## If unspecified, system trust roots on the apiserver are used.

caBundle: ""

## If enabled, generate a self-signed certificate, then patch the webhook configurations with the generated data.

## On chart upgrades (or if the secret exists) the cert will not be re-generated. You can use this to provide your own

## certs ahead of time if you wish.

##

patch:

enabled: true

image:

repository: jettech/kube-webhook-certgen

tag: v1.5.0

sha: ""

pullPolicy: IfNotPresent

resources: {}

## Provide a priority class name to the webhook patching job

##

priorityClassName: ""

podAnnotations: {}

nodeSelector: {}

affinity: {}

tolerations: []

# Use certmanager to generate webhook certs

certManager:

enabled: false

# issuerRef:

# name: "issuer"

# kind: "ClusterIssuer"

## Namespaces to scope the interaction of the Prometheus Operator and the apiserver (allow list).

## This is mutually exclusive with denyNamespaces. Setting this to an empty object will disable the configuration

##

namespaces: {}

# releaseNamespace: true

# additional:

# - kube-system

## Namespaces not to scope the interaction of the Prometheus Operator (deny list).

##

denyNamespaces: []

## Filter namespaces to look for prometheus-operator custom resources

##

alertmanagerInstanceNamespaces: []

prometheusInstanceNamespaces: []

thanosRulerInstanceNamespaces: []

## The clusterDomain value will be added to the cluster.peer option of the alertmanager.

## Without this specified option cluster.peer will have value alertmanager-monitoring-alertmanager-0.alertmanager-operated:9094 (default value)

## With this specified option cluster.peer will have value alertmanager-monitoring-alertmanager-0.alertmanager-operated.namespace.svc.cluster-domain:9094

##

# clusterDomain: "cluster.local"

## Service account for Alertmanager to use.

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/configure-service-account/

##

serviceAccount:

create: true

name: ""

## Configuration for Prometheus operator service

##

service:

annotations: {}

labels: {}

clusterIP: ""

## Port to expose on each node

## Only used if service.type is 'NodePort'

##

nodePort: 30080

nodePortTls: 30443

## Additional ports to open for Prometheus service

## ref: https://kubernetes.io/docs/concepts/services-networking/service/#multi-port-services

##

additionalPorts: []

## Loadbalancer IP

## Only use if service.type is "loadbalancer"

##

loadBalancerIP: ""

loadBalancerSourceRanges: []

## Service type

## NodePort, ClusterIP, loadbalancer

##

type: ClusterIP

## List of IP addresses at which the Prometheus server service is available

## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips

##

externalIPs: []

## Labels to add to the operator pod

##

podLabels: {}

## Annotations to add to the operator pod

##

podAnnotations: {}

## Assign a PriorityClassName to pods if set

# priorityClassName: ""

## Define Log Format

# Use logfmt (default) or json logging

# logFormat: logfmt

## Decrease log verbosity to errors only

# logLevel: error

## If true, the operator will create and maintain a service for scraping kubelets

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/master/helm/prometheus-operator/README.md

##

kubeletService:

enabled: true

namespace: kube-system

## Create a servicemonitor for the operator

##

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

## Scrape timeout. If not set, the Prometheus default scrape timeout is used.

scrapeTimeout: ""

selfMonitor: true

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Resource limits & requests

##

resources: {}

# limits:

# cpu: 200m

# memory: 200Mi

# requests:

# cpu: 100m

# memory: 100Mi

# Required for use in managed kubernetes clusters (such as AWS EKS) with custom CNI (such as calico),

# because control-plane managed by AWS cannot communicate with pods' IP CIDR and admission webhooks are not working

##

hostNetwork: false

## Define which Nodes the Pods are scheduled on.

## ref: https://kubernetes.io/docs/user-guide/node-selection/

##

nodeSelector: {}

## Tolerations for use with node taints

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

##

tolerations: []

# - key: "key"

# operator: "Equal"

# value: "value"

# effect: "NoSchedule"

## Assign custom affinity rules to the prometheus operator

## ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/

##

affinity: {}

# nodeAffinity:

# requiredDuringSchedulingIgnoredDuringExecution:

# nodeSelectorTerms:

# - matchExpressions:

# - key: kubernetes.io/e2e-az-name

# operator: In

# values:

# - e2e-az1

# - e2e-az2

dnsConfig: {}

# nameservers:

# - 1.2.3.4

# searches:

# - ns1.svc.cluster-domain.example

# - my.dns.search.suffix

# options:

# - name: ndots

# value: "2"

# - name: edns0

securityContext:

fsGroup: 65534

runAsGroup: 65534

runAsNonRoot: true

runAsUser: 65534

## Prometheus-operator image

##

image:

repository: quay.io/prometheus-operator/prometheus-operator

tag: v0.45.0

sha: ""

pullPolicy: IfNotPresent

## Prometheus image to use for prometheuses managed by the operator

##

# prometheusDefaultBaseImage: quay.io/prometheus/prometheus

## Alertmanager image to use for alertmanagers managed by the operator

##

# alertmanagerDefaultBaseImage: quay.io/prometheus/alertmanager

## Prometheus-config-reloader image to use for config and rule reloading

##

prometheusConfigReloaderImage:

repository: quay.io/prometheus-operator/prometheus-config-reloader

tag: v0.45.0

sha: ""

## Set the prometheus config reloader side-car CPU limit

##

configReloaderCpu: 100m

## Set the prometheus config reloader side-car memory limit

##

configReloaderMemory: 50Mi

## Set a Field Selector to filter watched secrets

##

secretFieldSelector: ""

## Deploy a Prometheus instance

##

prometheus:

enabled: true

## Annotations for Prometheus

##

annotations: {}

## Service account for Prometheuses to use.

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/configure-service-account/

##

serviceAccount:

create: true

name: ""

# Service for thanos service discovery on sidecar

# Enable this can make Thanos Query can use

# `--store=dnssrv+_grpc._tcp.${kube-prometheus-stack.fullname}-thanos-discovery.${namespace}.svc.cluster.local` to discovery

# Thanos sidecar on prometheus nodes

# (Please remember to change ${kube-prometheus-stack.fullname} and ${namespace}. Not just copy and paste!)

thanosService:

enabled: false

annotations: {}

labels: {}

portName: grpc

port: 10901

targetPort: "grpc"

## Configuration for Prometheus service

##

service:

annotations: {}

labels: {}

clusterIP: ""

## Port for Prometheus Service to listen on

##

port: 9090

## To be used with a proxy extraContainer port

targetPort: 9090

## List of IP addresses at which the Prometheus server service is available

## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips

##

externalIPs: []

## Port to expose on each node

## Only used if service.type is 'NodePort'

##

nodePort: 30090

## Loadbalancer IP

## Only use if service.type is "loadbalancer"

loadBalancerIP: ""

loadBalancerSourceRanges: []

## Service type

##

type: ClusterIP

sessionAffinity: ""

## Configuration for creating a separate Service for each statefulset Prometheus replica

##

servicePerReplica:

enabled: false

annotations: {}

## Port for Prometheus Service per replica to listen on

##

port: 9090

## To be used with a proxy extraContainer port

targetPort: 9090

## Port to expose on each node

## Only used if servicePerReplica.type is 'NodePort'

##

nodePort: 30091

## Loadbalancer source IP ranges

## Only used if servicePerReplica.type is "loadbalancer"

loadBalancerSourceRanges: []

## Service type

##

type: ClusterIP

## Configure pod disruption budgets for Prometheus

## ref: https://kubernetes.io/docs/tasks/run-application/configure-pdb/#specifying-a-poddisruptionbudget

## This configuration is immutable once created and will require the PDB to be deleted to be changed

## https://github.com/kubernetes/kubernetes/issues/45398

##

podDisruptionBudget:

enabled: false

minAvailable: 1

maxUnavailable: ""

# Ingress exposes thanos sidecar outside the cluster

thanosIngress:

enabled: false

# For Kubernetes >= 1.18 you should specify the ingress-controller via the field ingressClassName

# See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#specifying-the-class-of-an-ingress

# ingressClassName: nginx

annotations: {}

labels: {}

servicePort: 10901

## Port to expose on each node

## Only used if service.type is 'NodePort'

##

nodePort: 30901

## Hosts must be provided if Ingress is enabled.

##

hosts: []

# - thanos-gateway.domain.com

## Paths to use for ingress rules

##

paths: []

# - /

## For Kubernetes >= 1.18 you should specify the pathType (determines how Ingress paths should be matched)

## See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#better-path-matching-with-path-types

# pathType: ImplementationSpecific

## TLS configuration for Thanos Ingress

## Secret must be manually created in the namespace

##

tls: []

# - secretName: thanos-gateway-tls

# hosts:

# - thanos-gateway.domain.com

ingress:

enabled: true

# For Kubernetes >= 1.18 you should specify the ingress-controller via the field ingressClassName

# See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#specifying-the-class-of-an-ingress

# ingressClassName: nginx

annotations: {}

labels: {}

## Hostnames.

## Must be provided if Ingress is enabled.

##

hosts:

- prometheus.prod.test.local

#hosts: []

## Paths to use for ingress rules - one path should match the prometheusSpec.routePrefix

##

paths:

- /

## For Kubernetes >= 1.18 you should specify the pathType (determines how Ingress paths should be matched)

## See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#better-path-matching-with-path-types

# pathType: ImplementationSpecific

## TLS configuration for Prometheus Ingress

## Secret must be manually created in the namespace

##

tls: []

# - secretName: prometheus-general-tls

# hosts:

# - prometheus.example.com

## Configuration for creating an Ingress that will map to each Prometheus replica service

## prometheus.servicePerReplica must be enabled

##

ingressPerReplica:

enabled: false

# For Kubernetes >= 1.18 you should specify the ingress-controller via the field ingressClassName

# See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#specifying-the-class-of-an-ingress

# ingressClassName: nginx

annotations: {}

labels: {}

## Final form of the hostname for each per replica ingress is

## {{ ingressPerReplica.hostPrefix }}-{{ $replicaNumber }}.{{ ingressPerReplica.hostDomain }}

##

## Prefix for the per replica ingress that will have `-$replicaNumber`

## appended to the end

hostPrefix: ""

## Domain that will be used for the per replica ingress

hostDomain: ""

## Paths to use for ingress rules

##

paths: []

# - /

## For Kubernetes >= 1.18 you should specify the pathType (determines how Ingress paths should be matched)

## See https://kubernetes.io/blog/2020/04/02/improvements-to-the-ingress-api-in-kubernetes-1.18/#better-path-matching-with-path-types

# pathType: ImplementationSpecific

## Secret name containing the TLS certificate for Prometheus per replica ingress

## Secret must be manually created in the namespace

tlsSecretName: ""

## Separated secret for each per replica Ingress. Can be used together with cert-manager

##

tlsSecretPerReplica:

enabled: false

## Final form of the secret for each per replica ingress is

## {{ tlsSecretPerReplica.prefix }}-{{ $replicaNumber }}

##

prefix: "prometheus"

## Configure additional options for default pod security policy for Prometheus

## ref: https://kubernetes.io/docs/concepts/policy/pod-security-policy/

podSecurityPolicy:

allowedCapabilities: []

allowedHostPaths: []

volumes: []

serviceMonitor:

## Scrape interval. If not set, the Prometheus default scrape interval is used.

##

interval: ""

selfMonitor: true

## scheme: HTTP scheme to use for scraping. Can be used with `tlsConfig` for example if using istio mTLS.

scheme: ""

## tlsConfig: TLS configuration to use when scraping the endpoint. For example if using istio mTLS.

## Of type: https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#tlsconfig

tlsConfig: {}

bearerTokenFile:

## metric relabel configs to apply to samples before ingestion.

##

metricRelabelings: []

# - action: keep

# regex: 'kube_(daemonset|deployment|pod|namespace|node|statefulset).+'

# sourceLabels: [__name__]

# relabel configs to apply to samples before ingestion.

##

relabelings: []

# - sourceLabels: [__meta_kubernetes_pod_node_name]

# separator: ;

# regex: ^(.*)$

# targetLabel: nodename

# replacement: $1

# action: replace

## Settings affecting prometheusSpec

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#prometheusspec

##

prometheusSpec:

## If true, pass --storage.tsdb.max-block-duration=2h to prometheus. This is already done if using Thanos

##

disableCompaction: false

## APIServerConfig

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#apiserverconfig

##

apiserverConfig: {}

## Interval between consecutive scrapes.

## Defaults to 30s.

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/release-0.44/pkg/prometheus/promcfg.go#L180-L183

##

scrapeInterval: ""

## Number of seconds to wait for target to respond before erroring

##

scrapeTimeout: ""

## Interval between consecutive evaluations.

##

evaluationInterval: ""

## ListenLocal makes the Prometheus server listen on loopback, so that it does not bind against the Pod IP.

##

listenLocal: false

## EnableAdminAPI enables Prometheus the administrative HTTP API which includes functionality such as deleting time series.

## This is disabled by default.

## ref: https://prometheus.io/docs/prometheus/latest/querying/api/#tsdb-admin-apis

##

enableAdminAPI: false

## Image of Prometheus.

##

image:

repository: quay.io/prometheus/prometheus

tag: v2.24.0

sha: ""

## Tolerations for use with node taints

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

##

tolerations: []

# - key: "key"

# operator: "Equal"

# value: "value"

# effect: "NoSchedule"

## If specified, the pod's topology spread constraints.

## ref: https://kubernetes.io/docs/concepts/workloads/pods/pod-topology-spread-constraints/

##

topologySpreadConstraints: []

# - maxSkew: 1

# topologyKey: topology.kubernetes.io/zone

# whenUnsatisfiable: DoNotSchedule

# labelSelector:

# matchLabels:

# app: prometheus

## Alertmanagers to which alerts will be sent

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#alertmanagerendpoints

##

## Default configuration will connect to the alertmanager deployed as part of this release

##

alertingEndpoints: []

# - name: ""

# namespace: ""

# port: http

# scheme: http

# pathPrefix: ""

# tlsConfig: {}

# bearerTokenFile: ""

# apiVersion: v2

## External labels to add to any time series or alerts when communicating with external systems

##

externalLabels: {}

## Name of the external label used to denote replica name

##

replicaExternalLabelName: ""

## If true, the Operator won't add the external label used to denote replica name

##

replicaExternalLabelNameClear: false

## Name of the external label used to denote Prometheus instance name

##

prometheusExternalLabelName: ""

## If true, the Operator won't add the external label used to denote Prometheus instance name

##

prometheusExternalLabelNameClear: false

## External URL at which Prometheus will be reachable.

##

externalUrl: ""

## Define which Nodes the Pods are scheduled on.

## ref: https://kubernetes.io/docs/user-guide/node-selection/

##

nodeSelector: {}

## Secrets is a list of Secrets in the same namespace as the Prometheus object, which shall be mounted into the Prometheus Pods.

## The Secrets are mounted into /etc/prometheus/secrets/. Secrets changes after initial creation of a Prometheus object are not

## reflected in the running Pods. To change the secrets mounted into the Prometheus Pods, the object must be deleted and recreated

## with the new list of secrets.

##

secrets: []

## ConfigMaps is a list of ConfigMaps in the same namespace as the Prometheus object, which shall be mounted into the Prometheus Pods.

## The ConfigMaps are mounted into /etc/prometheus/configmaps/.

##

configMaps: []

## QuerySpec defines the query command line flags when starting Prometheus.

## ref: https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#queryspec

##

query: {}

## Namespaces to be selected for PrometheusRules discovery.

## If nil, select own namespace. Namespaces to be selected for ServiceMonitor discovery.

## See https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#namespaceselector for usage

##

ruleNamespaceSelector: {}

## If true, a nil or {} value for prometheus.prometheusSpec.ruleSelector will cause the

## prometheus resource to be created with selectors based on values in the helm deployment,

## which will also match the PrometheusRule resources created

##

ruleSelectorNilUsesHelmValues: true

## PrometheusRules to be selected for target discovery.

## If {}, select all ServiceMonitors

##

ruleSelector: {}

## Example which select all prometheusrules resources

## with label "prometheus" with values any of "example-rules" or "example-rules-2"

# ruleSelector:

# matchExpressions:

# - key: prometheus

# operator: In

# values:

# - example-rules

# - example-rules-2

#

## Example which select all prometheusrules resources with label "role" set to "example-rules"

# ruleSelector:

# matchLabels:

# role: example-rules

## If true, a nil or {} value for prometheus.prometheusSpec.serviceMonitorSelector will cause the

## prometheus resource to be created with selectors based on values in the helm deployment,

## which will also match the servicemonitors created

##

serviceMonitorSelectorNilUsesHelmValues: true

## ServiceMonitors to be selected for target discovery.

## If {}, select all ServiceMonitors

##

serviceMonitorSelector: {}

## Example which selects ServiceMonitors with label "prometheus" set to "somelabel"

# serviceMonitorSelector:

# matchLabels:

# prometheus: somelabel

## Namespaces to be selected for ServiceMonitor discovery.

##

serviceMonitorNamespaceSelector:

matchLabels:

prometheus: enabled

## Example which selects ServiceMonitors in namespaces with label "prometheus" set to "somelabel"

# serviceMonitorNamespaceSelector:

# matchLabels:

# prometheus: somelabel

## If true, a nil or {} value for prometheus.prometheusSpec.podMonitorSelector will cause the

## prometheus resource to be created with selectors based on values in the helm deployment,

## which will also match the podmonitors created

##

podMonitorSelectorNilUsesHelmValues: true

## PodMonitors to be selected for target discovery.

## If {}, select all PodMonitors

##

podMonitorSelector: {}

## Example which selects PodMonitors with label "prometheus" set to "somelabel"

# podMonitorSelector:

# matchLabels:

# prometheus: somelabel

## Namespaces to be selected for PodMonitor discovery.

## See https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#namespaceselector for usage

##

podMonitorNamespaceSelector: {}

## If true, a nil or {} value for prometheus.prometheusSpec.probeSelector will cause the

## prometheus resource to be created with selectors based on values in the helm deployment,

## which will also match the probes created

##

probeSelectorNilUsesHelmValues: true

## Probes to be selected for target discovery.

## If {}, select all Probes

##

probeSelector: {}

## Example which selects Probes with label "prometheus" set to "somelabel"

# probeSelector:

# matchLabels:

# prometheus: somelabel

## Namespaces to be selected for Probe discovery.

## See https://github.com/prometheus-operator/prometheus-operator/blob/master/Documentation/api.md#namespaceselector for usage

##

probeNamespaceSelector: {}

## How long to retain metrics

##

retention: 10d

## Maximum size of metrics

##

retentionSize: ""

## Enable compression of the write-ahead log using Snappy.

##

walCompression: false

## If true, the Operator won't process any Prometheus configuration changes

##

paused: false

## Number of replicas of each shard to deploy for a Prometheus deployment.

## Number of replicas multiplied by shards is the total number of Pods created.

##

replicas: 1

## EXPERIMENTAL: Number of shards to distribute targets onto.

## Number of replicas multiplied by shards is the total number of Pods created.

## Note that scaling down shards will not reshard data onto remaining instances, it must be manually moved.

## Increasing shards will not reshard data either but it will continue to be available from the same instances.

## To query globally use Thanos sidecar and Thanos querier or remote write data to a central location.

## Sharding is done on the content of the `__address__` target meta-label.

##

shards: 1

## Log level for Prometheus be configured in

##

logLevel: info

## Log format for Prometheus be configured in

##

logFormat: logfmt

## Prefix used to register routes, overriding externalUrl route.

## Useful for proxies that rewrite URLs.

##

routePrefix: /

## Standard object’s metadata. More info: https://github.com/kubernetes/community/blob/master/contributors/devel/sig-architecture/api-conventions.md#metadata

## Metadata Labels and Annotations gets propagated to the prometheus pods.

##

podMetadata: {}

# labels:

# app: prometheus

# k8s-app: prometheus

## Pod anti-affinity can prevent the scheduler from placing Prometheus replicas on the same node.

## The default value "soft" means that the scheduler should *prefer* to not schedule two replica pods onto the same node but no guarantee is provided.

## The value "hard" means that the scheduler is *required* to not schedule two replica pods onto the same node.

## The value "" will disable pod anti-affinity so that no anti-affinity rules will be configured.

podAntiAffinity: ""

## If anti-affinity is enabled sets the topologyKey to use for anti-affinity.

## This can be changed to, for example, failure-domain.beta.kubernetes.io/zone

##

podAntiAffinityTopologyKey: kubernetes.io/hostname

## Assign custom affinity rules to the prometheus instance

## ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/

##

affinity: {}

# nodeAffinity: